StarFive VisionFive 2

This article has issues with flow and converging the original article's mission. Please hold off from future merges until a clean up has been completed.

This page describes several methods for installing Gentoo on the StarFive VisionFive 2.

Introduction

This page aims to provide a comprehensive introduction to the various methods of installing Gentoo onto Embedded hardware using the VisionFive 2 as an example device.

The majority of the instructions here are not specific to the VisionFive 2 and may be applied to other devices with similar hardware. Please apply some common sense when adapting these instructions for other devices and do not blindly copy and paste commands without understanding what they do.

Note that the procedures described below are deliberately not the most concise: environment variables that would typically be exported are sometimes repeated on each command line, and options are spelled out where a wrapper script would be more convenient. This is intentional, as the article is meant to be a learning experience for the reader.

This article is intended to supplement the Embedded Handbook.

Methods to compile packages

There are several ways to approach building a rootfs. This article tries to cover two of them: using a Crossdev environment, and using a chroot to build packages with QEMU userspace emulation.

Sections that are solely meant for Crossdev users have CROSSDEV: prepended to their name, while sections that are solely meant for QEMU users have QEMU: prepended to their name.

Hardware

For a detailed overview of the StarFive hardware, please visit StarFive_VisionFive_2/Hardware

Boot sequence

The boot sequence of a typical RISC-V device is as follows:

- When the SoC is powered on, the CPU fetches instructions beginning at address 0x0, where the BootROM (BROM, also known as the Primary Program Loader) is located.

- The BROM loads the Secondary Program Loader (SPL) from some form of Non Volatile Memory (NVM).

- The SPL loads a U-Boot Flattened Image Tree (FIT) image (presumably from the same device). This FIT image contains the U-Boot binary, the Device Tree Blob (DTB) and the OpenSBI binary. This image may be combined with the SPL.

- U-Boot loads the Linux Kernel.

In the case of the VisionFive 2/JH7110, the BROM is located on 32k of onboard (on the SoC) memory which may be seen on the SoC block diagram. The BROM is what it is: a ROM (Read Only Memory) so it is impossible to update it.

The VisionFive 2 BROM uses the state of the RGPIO pins on the board to determine which attached storage device (NVM) to load the U-Boot Secondary Program Loader (U-Boot SPL) (u-boot-spl.bin.normal.out) from. By default this is a partition with a GUID type code of 2E54B353-1271-4842-806F-E436D6AF6985. The number of this partition is irrelevant, though it is typically partition 1.

In its default configuration, the U-Boot SPL then loads the U-Boot FIT image (u-boot.itb) from partition 2 (CONFIG_SYS_MMCSD_RAW_MODE_U_BOOT_PARTITION=0x2). When formatting this partition the recommended GUID type code is BC13C2FF-59E6-4262-A352-B275FD6F7172.

The FIT image (u-boot.itb) is a combination of OpenSBI's fw_dynamic.bin, u-boot-nodtb.bin and the device tree blob (jh7110-starfive-visionfive-2-v1.3b.dtb or jh7110-starfive-visionfive-2-v1.2a.dtb).

The VisionFive 2 has a two-switch RGPIO header on the board to select the device to load the firmware from. The configurations are as follows:

| Option | RGPIO_0 | RGPIO_1 |
|---|---|---|
| Onboard QSPI Flash (factory setting) | Low | Low |
| SDIO3.0 (TF / microSD card) | High | Low |
| eMMC | Low | High |
| UART | High | High |
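As a quick cross-check before moving the switches, the table above can be encoded in a small lookup helper. This is only a sketch: the function name `vf2_boot_switches` and the short source names (`qspi`, `sd`, `emmc`, `uart`) are made up for illustration and are not part of any StarFive tool.

```shell
# vf2_boot_switches SOURCE: print the RGPIO switch positions for a boot source.
# SOURCE is one of: qspi, sd, emmc, uart (hypothetical short names).
vf2_boot_switches() {
    case "$1" in
        qspi) echo "RGPIO_0=Low RGPIO_1=Low" ;;    # onboard QSPI flash (factory setting)
        sd)   echo "RGPIO_0=High RGPIO_1=Low" ;;   # SDIO3.0 (TF / microSD card)
        emmc) echo "RGPIO_0=Low RGPIO_1=High" ;;   # eMMC
        uart) echo "RGPIO_0=High RGPIO_1=High" ;;  # UART
        *)    echo "unknown boot source: $1" >&2; return 1 ;;
    esac
}
```

Calling `vf2_boot_switches uart` prints the positions needed for booting over the serial link.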

First start

Serial connection

The only way to interact with the board is to use a serial link as its HDMI output is not functional (yet). The following is valid for board revision 1.3B.

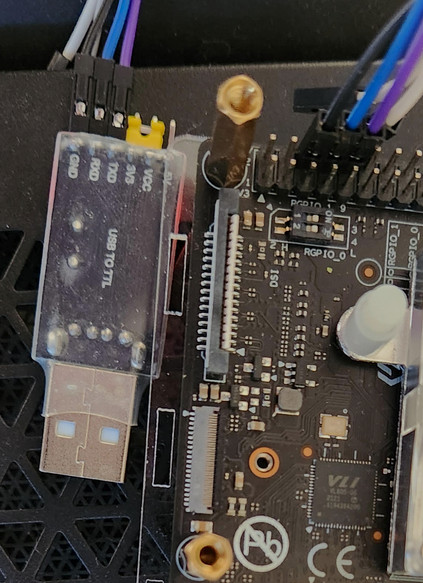

The StarFive VisionFive 2 supports a serial connection (3.3V) through its GPIO pins. Its UART is accessible via the pins 6 (GND), 8 (TX) and 10 (RX) so a crossed-over cable is required:

| StarFive VisionFive 2 (1.3B) GPIO pin | Serial adapter pin |
|---|---|
| 6 (Ground) | Ground |
| 8 (TX) | RX |
| 10 (RX) | TX |

Illustration for a 1.3B board revision with a connected USB adapter (from left to right its pins are GND - black wire, RXD - blue wire, TXD - violet wire, 3.3V + VCC - yellow jumper, 5V - not connected).

Install a communication program that can handle a serial port connection and transfer files over X-Modem or Y-Modem protocols like net-dialup/minicom (will be used for the rest of the explanations):

root #emerge --ask net-dialup/minicom

Then launch Minicom by specifying the serial device to use (likely /dev/ttyUSB0):

user $minicom -D /dev/ttyUSB0 -b 115200

The serial port connection parameters should be set as follows:

- Speed: 115200 bps

- Data: 8 bits

- Parity: None

- Stop bits: 1 bit

- Flow control: none (neither hardware nor software)

To set those parameters directly in Minicom rather than on the command line, use the keys Ctrl+A then press Z then press O when the menu shows up.

Powering up

As the board's QSPI flash already contains firmware (preloaded at the factory), the board can be used straight out of the box. The QSPI flash is too small to contain a full-fledged OS; nevertheless it is more than enough to hold an OpenSBI/U-Boot environment (comparable to OpenBOOT for users of SPARC machines).

With the serial connection setup in Minicom and the serial cable connected to the StarFive VisionFive 2 UART pins, powering the board up should lead to the following display in Minicom:

U-Boot SPL 2021.10 (Sep 28 2024 - 01:14:56 +0800)

So far so good: a U-Boot command prompt beginning with StarFive # should show up at the end of the boot process. Do not worry about error messages like Card did not respond to voltage select! : -110 and Device 0: unknown device; they are normal at this point. As a test, enter a command such as help to list the commands available in the U-Boot shell:

StarFive #help

Everything is in good working order at this point. The StarFive VisionFive 2 can be powered down by removing its USB-C power cable (this is a safe operation and will not corrupt anything).

First aid kit

Nothing being perfect, let's take precautions. There is a recovery image available on GitHub that can be used if, for one reason or another, the onboard SPI Flash memory becomes corrupt. Should that happen, the recovery image makes it possible to start the StarFive VisionFive 2 and reflash it.

First things first, download the most recent recovery binary file from https://github.com/starfive-tech/Tools/tree/master/recovery (that binary file is named jh7110-recovery-YYYYMMDD.bin, e.g. jh7110-recovery-20230322.bin).

With the StarFive VisionFive 2 board powered down, change the boot selector setting to make the board boot via its UART (i.e. serial link) rather than its SPI Flash memory: RGPIO_0 and RGPIO_1 must both be set to High (see the boot selector table in the Boot sequence section). Once the boot selector setting has been changed, power the board up again. At this point, Minicom should show a series of "C" characters, meaning that the board is patiently waiting for its firmware image to be sent by Minicom via the X-Modem protocol:

U-Boot SPL 2021.10 (Sep 28 2024 - 01:14:56 +0800) (C)StarFive CCCCCCCCCCCCCCCCCCCCC

Within Minicom, transfer the recovery binary file just downloaded from GitHub using the X-Modem protocol (Ctrl+A then press S then select xmodem). Once the recovery image has been transferred, the StarFive VisionFive 2 board automatically boots from it and presents a menu from which the operation to perform can be chosen:

U-Boot SPL 2021.10 (Sep 28 2024 - 01:14:56 +0800)

Do not use option 4 (OTP) without knowing exactly what it does: an OTP is a One Time Programmable memory, or WORM (Write Once Read Many) memory. Once an OTP memory has been programmed ("fused"), it cannot be programmed again unless specialized equipment or procedures are used. The OTP fuses do not have public documentation; see the following discussion thread: https://github.com/starfive-tech/Tools/issues/8

Options 0 and 2 can be used to reprogram the onboard SPI Flash memory to make it bootable again. Options 1 and 3 do exactly the same for an eMMC module, if one is plugged in at the back of the board.

Updating the stock firmware preloaded at factory

Before attempting to install Gentoo onto the VisionFive 2 it is essential to update the firmware. Depending on the age of the firmware the current (modern) official images from StarFive may fail to load.

There are several methods for updating the firmware, including:

- Using the U-Boot console to retrieve and install firmware images via TFTP

- Invoking the flashcp command (from sys-fs/mtd-utils) on a running system

- Sending firmware binaries over a UART console interface

- Booting updated firmware off removable media

Update from Debian

TODO

UART method

Updating U-Boot will likely reset the contents of U-Boot variables to their default value. If some of them were customized to have a fully working system it is highly advised to keep a trace of their current value before proceeding!

First, gather the following firmware files from the latest VisionFive2 software release:

| Firmware file | Base address in SPI flash memory |
|---|---|
| u-boot-spl.bin.normal.out | 0x0 |
| visionfive2_fw_payload.img | 0x100000 |

Second, calculate their CRC-32 with /usr/bin/crc32 (provided by dev-perl/Archive-Zip) on the host; the results might differ from what is shown here:

user $crc32 u-boot-spl.bin.normal.out visionfive2_fw_payload.img

4f92e463 u-boot-spl.bin.normal.out
6949ec27 visionfive2_fw_payload.img
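If dev-perl/Archive-Zip is not installed, the same standard CRC-32 can be recovered from the trailer that gzip appends to its output (the last 8 bytes are the CRC-32 followed by the input size, both little-endian). The sketch below assumes gzip, od and awk are available on the host; the function name `crc32_of` is hypothetical.

```shell
# crc32_of FILE: print the CRC-32 of FILE as 8 lowercase hex digits.
crc32_of() {
    # Compress to stdout, keep the 4 CRC bytes of the gzip trailer,
    # dump them as hex and reverse the little-endian byte order.
    gzip -c < "$1" | tail -c 8 | head -c 4 | od -An -tx1 |
        awk '{ printf "%s%s%s%s\n", $4, $3, $2, $1 }'
}
```

Running `crc32_of u-boot-spl.bin.normal.out` should print the same value as the crc32 command shown above.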

Third, ensure the StarFive VisionFive 2 is set up to boot off the onboard SPI Flash memory: both RGPIO_0 and RGPIO_1 switches must be set to Low. Once done, power the board on; the U-Boot command prompt should be reached after a few seconds. From that prompt, run the following command to initiate a file transfer via the Y-Modem protocol (which calculates a CRC for each chunk of transferred data to ensure no corruption has occurred):

StarFive #loady

## Ready for binary (ymodem) download to 0x60000000 at 115200 bps...
CCCCC

At this point, use Ctrl+A then S in Minicom to enter the file transfer menu, then select Ymodem, then a single firmware file of your choice (beginning with u-boot-spl.bin.normal.out or visionfive2_fw_payload.img is not important as long as both are processed) and wait for the transfer to complete.

StarFive #loady

## Ready for binary (ymodem) download to 0x60000000 at 115200 bps...
CC0(STX)/0(CAN) packets, 6 retries
## Total Size = 0x00025a30 = 154160 Bytes
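The Total Size line reports the number of bytes received, in hexadecimal and in decimal. Comparing it with the size of the file on the host is a cheap way to spot a truncated transfer before checking the CRC. A minimal sketch, where the function name `size_matches` is an assumption made for illustration:

```shell
# size_matches FILE HEXSIZE: succeed if FILE is exactly HEXSIZE bytes long.
# HEXSIZE is the 0x-prefixed value printed by U-Boot after "Total Size =".
size_matches() {
    local_size=$(wc -c < "$1" | tr -d ' ')
    reported_size=$(printf '%d' "$2")   # printf converts a 0x-prefixed constant to decimal
    [ "$local_size" -eq "$reported_size" ]
}
```

For the transfer shown above, `size_matches u-boot-spl.bin.normal.out 0x00025a30` succeeds only if the local file is 154160 bytes long.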

At this point the firmware payload sits in the board's RAM but remains to be written to the SPI flash memory. Before committing it, check the payload's CRC-32 as seen from the StarFive VisionFive 2 board (the result should match what has been computed on the host):

StarFive #crc32 $loadaddr $filesize

$filesize is a shell variable automatically initialized when the transfer completes. A couple of shell variables like $loadaddr are preset at system startup. To see all defined variables use the shell command: printenv.

If the CRC-32 value calculated on the board matches the one calculated on the host, the firmware file contents can be committed to the onboard SPI flash memory. Depending on which firmware file has been transferred, the command to use is:

- For u-boot-spl.bin.normal.out:

StarFive #sf update $loadaddr 0x0 $filesize

- For visionfive2_fw_payload.img:

StarFive #sf update $loadaddr 0x100000 $filesize

Repeat the operations above for the second firmware file. Once both firmware files have been transferred and committed to the onboard SPI flash memory, power-cycle the board; the U-Boot command prompt should show up again after a couple of seconds. If something went wrong and the board can no longer boot from the onboard SPI flash memory, it can be recovered using the recovery boot image; see section First aid kit.
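Because writing a file at the wrong offset leaves the board unbootable, it can help to derive the command from the file name instead of typing the offset by hand. The helper below is a host-side sketch that only prints the U-Boot command to paste into the serial console; the function names are hypothetical and the offsets are the ones from the table above.

```shell
# vf2_flash_offset FILE: print the SPI flash base address for a firmware file.
vf2_flash_offset() {
    case "$1" in
        u-boot-spl.bin.normal.out)  echo 0x0 ;;
        visionfive2_fw_payload.img) echo 0x100000 ;;
        *) echo "no known flash offset for: $1" >&2; return 1 ;;
    esac
}

# vf2_sf_update_cmd FILE: print the matching "sf update" command line.
vf2_sf_update_cmd() {
    offset=$(vf2_flash_offset "$1") || return 1
    # $loadaddr and $filesize are U-Boot variables, so they must stay unexpanded here.
    printf 'sf update $loadaddr %s $filesize\n' "$offset"
}
```

An unknown file name makes the helper fail rather than guess an offset.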

Prerequisites

Before customizing the root filesystem required to boot, two steps are involved:

- Setting up QEMU to make it handle RISC-V binaries

- Downloading an existing RISC-V Gentoo stage3 (QEMU will be embedded in that environment to be able to "natively" compile packages)

Environment variables and initial setup

Put the export statements within a shell script that can be sourced on demand, or within a .bash_file file.

To keep things clear throughout the procedures detailed in the next sections, start by defining some shell variables that are used all along this article (the trailing dash in RISCV_XCOMPILE is not an error):

root #export RISCV_WORKDIR_PATH=/path/to/a/work/directory

root #export RISCV_ROOTFS_PATH=${RISCV_WORKDIR_PATH}/rootfs

root #export RISCV_XARCH=riscv64-unknown-linux-gnu

root #export RISCV_XCOMPILE=${RISCV_XARCH}-

root #export RISCV_XDEV_ROOT=/usr/${RISCV_XARCH}

root #export QEMU_LD_PREFIX=${RISCV_ROOTFS_PATH}

And create the directory structure where the work will be done:

root #mkdir -p ${RISCV_ROOTFS_PATH}
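One possible way to make these exports reusable across shell sessions is to wrap them in a function kept in a file that is sourced on demand. This is a sketch only: the function name `riscv_env` is an assumption, while the variable names and values are the ones defined above.

```shell
# riscv_env WORKDIR: export the variables used throughout this article.
riscv_env() {
    [ -n "$1" ] || { echo "usage: riscv_env /path/to/a/work/directory" >&2; return 1; }
    export RISCV_WORKDIR_PATH="$1"
    export RISCV_ROOTFS_PATH="${RISCV_WORKDIR_PATH}/rootfs"
    export RISCV_XARCH=riscv64-unknown-linux-gnu
    export RISCV_XCOMPILE="${RISCV_XARCH}-"      # the trailing dash is intentional
    export RISCV_XDEV_ROOT="/usr/${RISCV_XARCH}"
    export QEMU_LD_PREFIX="${RISCV_ROOTFS_PATH}"
}
```

After sourcing the file, running `riscv_env` with the work directory as its only argument sets up every variable in one step.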

Configure QEMU-user and binfmt (optional)

This step deploys qemu-riscv64 inside the RISC-V root filesystem. If chrooting into the latter is not planned, this step can be skipped entirely.

QEMU should be configured to, at least, support:

- full-system emulation (i.e. QEMU_SOFTMMU_TARGETS): RISC-V (riscv64)

- userland emulation (i.e. QEMU_USER_TARGETS): RISC-V (riscv64)

The very first step is to check what lies inside /etc/portage/make.conf and add the targets discussed above if they are missing:

/etc/portage/make.conf

QEMU_SOFTMMU_TARGETS="riscv64"

QEMU_USER_TARGETS="riscv64"

The next step is to ensure that app-emulation/qemu has, at least, the USE flag static-user enabled as anything outside the chroot will be inaccessible (QEMU binaries should be fully autonomous):

/etc/portage/package.use/qemu

app-emulation/qemu static-user

Now, re-emerge app-emulation/qemu and package it to be able to be re-deployed inside the chrooted target environment:

root #emerge --ask app-emulation/qemu

root #quickpkg app-emulation/qemu

It is time to register the ELF/RISC-V signature to the miscellaneous binary formats known by the Linux kernel to make the kernel invoke the adequate QEMU userland emulator when needed:

root #mount binfmt_misc -t binfmt_misc /proc/sys/fs/binfmt_misc

root #echo ':riscv64:M::\x7fELF\x02\x01\x01\x00\x00\x00\x00\x00\x00\x00\x00\x00\x02\x00\xf3\x00:\xff\xff\xff\xff\xff\xff\xff\x00\xff\xff\xff\xff\xff\xff\xff\xff\xfe\xff\xff\xff:/usr/bin/qemu-riscv64:' > /etc/binfmt.d/qemu-riscv64-static.conf

Then restart binfmt support:

- OpenRC:

root #rc-service binfmt restart

- Systemd:

root #systemctl restart systemd-binfmt

At this point a pseudo-file called riscv64 should appear under /proc/sys/fs/binfmt_misc :

root #ls -l /proc/sys/fs/binfmt_misc

total 0
--w------- 1 root root 0 Nov 12 10:13 register
-rw-r--r-- 1 root root 0 Nov 12 10:13 riscv64
-rw-r--r-- 1 root root 0 Nov 6 10:57 status

The pseudo-file /proc/sys/fs/binfmt_misc/riscv64 contains:

root #cat /proc/sys/fs/binfmt_misc/riscv64

enabled
interpreter /usr/bin/qemu-riscv64
flags:
offset 0
magic 7f454c460201010000000000000000000200f300
mask ffffffffffffff00fffffffffffffffffeffffff
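The magic value registered above is simply the beginning of an ELF header: the \x7fELF signature, the class byte (02 = 64-bit), the data encoding byte (01 = little-endian) and, at bytes 19 and 20, the machine type 0x00f3 (RISC-V) stored in little-endian order. The sketch below performs a simplified version of that match on a file, which can help when a binary is unexpectedly not handed over to QEMU. The function name `is_riscv64_elf` is hypothetical, and unlike the kernel mask it ignores the e_type field.

```shell
# is_riscv64_elf FILE: succeed if FILE starts with a 64-bit little-endian RISC-V ELF header.
is_riscv64_elf() {
    # Dump the first 20 bytes as space-separated hex pairs on a single line.
    hdr=$(od -An -tx1 -N20 < "$1" | tr -s ' \n' ' ')
    # Split the dump into positional parameters, one per byte.
    set -- $hdr
    [ "$#" -eq 20 ] || return 1
    # Bytes 1-4: signature, byte 5: class, byte 6: endianness, bytes 19-20: e_machine.
    [ "$1$2$3$4" = "7f454c46" ] && [ "$5" = "02" ] && [ "$6" = "01" ] &&
        [ "${19}" = "f3" ] && [ "${20}" = "00" ]
}
```

For example, `is_riscv64_elf ${RISCV_ROOTFS_PATH}/bin/bash && echo "RISC-V binary"` can be run once the stage 3 has been extracted.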

Download an existing RISC-V stage 3

To see all the available images: https://gentoo.osuosl.org/releases/riscv/autobuilds

Rather than building a full crossdev environment and the subsequent stage archives with Catalyst, it is possible to start from the pre-built tarballs that Gentoo offers for RISC-V machines, which avoids some tedious steps.

To begin with, grab a Gentoo stage 3 tarball from https://www.gentoo.org/downloads/#riscv. The U74 cores of a JH7110 SoC support hardware double precision floating point operations (the letter G in RV64GC meaning IMAFD) so any of the RV64GC/LP64D stage 3 images is suitable as a starting point. On the other hand, all RV64GC/LP64 stage 3 images require no hardware double precision floating point support thus can be used on all RV64GC processors.

For the rest of the explanations, the systemd flavor of rv64gc/lp64d will be used.

To download one of the available images, a tool like net-misc/aria2 or net-misc/wget can be used.
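The exact tarball name changes with every autobuild, so it is usually looked up rather than typed. The sketch below assumes that the autobuilds directory publishes a plain-text index (for instance a file named latest-stage3-rv64_lp64d-systemd.txt) whose data lines start with the relative path of the tarball; both the index name and its layout are assumptions to verify against the mirror. The function name `latest_stage3_path` is hypothetical.

```shell
# latest_stage3_path: read an autobuild index on stdin and print the
# relative path of the first stage 3 tarball it lists.
latest_stage3_path() {
    # Comment and signature lines do not match; the first matching line wins.
    awk '$1 ~ /stage3-.*\.tar\.xz$/ { print $1; exit }'
}
```

The printed path is relative to the autobuilds directory, so appending it to the autobuilds URL given above yields the address to hand over to wget or aria2c.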

Generate the cross-build environment

Two steps are involved here:

- Create the cross-build environment

- Set up (link) the cross-build environment to a native RISC-V stage 3

Create the cross-build environment

Rather than using the (already outdated) version of GCC specified in the VisionFive 2 SDK, crossdev may be used to build an up-to-date cross-compiler from the Gentoo repository. This will then be used to bootstrap a Catalyst stage and to build the kernel and any required firmware binaries.

Install the sys-devel/crossdev package and generate a RISC-V cross toolchain (see Cross Build Environment for further information):

root #emerge --ask sys-devel/crossdev

Create an ebuild repository for crossdev, preventing it from choosing a (seemingly) random repository to store its packages:

root #mkdir -p /var/db/repos/crossdev/{profiles,metadata}

root #echo 'crossdev' > /var/db/repos/crossdev/profiles/repo_name

root #echo 'masters = gentoo' > /var/db/repos/crossdev/metadata/layout.conf

root #chown -R portage:portage /var/db/repos/crossdev

If the Gentoo ebuild repository is synchronized using Git, or any other method with Manifest files that do not include checksums for ebuilds:

/var/db/repos/crossdev/metadata/layout.conf

masters = gentoo

thin-manifests = true

Instruct Portage and crossdev to use this ebuild repository:

/etc/portage/repos.conf/crossdev.conf

[crossdev]

location = /var/db/repos/crossdev

priority = 10

masters = gentoo

auto-sync = no

Then build the cross-build toolchain:

root #crossdev --target ${RISCV_XARCH}

Once crossdev has built the cross-toolchain, it is installed to /usr/${RISCV_XARCH} (i.e. /usr/riscv64-unknown-linux-gnu in the present case). The cross-compiler is invoked by prefixing the command with the target (stored in ${RISCV_XARCH}). Hence ${RISCV_XARCH}-gcc will be expanded and run as riscv64-unknown-linux-gnu-gcc. Give this a try to validate what kind of cross-compiler has been generated:

root #${RISCV_XARCH}-gcc -v

Set the cross-build environment up

Now that the baseline is in place, the next step of the journey consists of establishing a transparent link between the cross-build environment and the real RISC-V root filesystem, which will ultimately be flashed onto the media used by the StarFive VisionFive 2.

First of all, the cross-build environment is a sort of split-usr beast and must be converted to a merged-usr one to make it coherent with the real RISC-V stage 3 downloaded and extracted earlier. If not already done, emerge sys-apps/merge-usr:

root #emerge --ask sys-apps/merge-usr

Then do the conversion itself, starting with a verbose first pass as a dry run:

root #merge-usr --verbose --dryrun --root ${RISCV_XDEV_ROOT}

root #merge-usr --root ${RISCV_XDEV_ROOT}

INFO: Migrating files from '/usr/riscv64-unknown-linux-gnu/sbin' to '/usr/riscv64-unknown-linux-gnu/usr/bin'
INFO: Replacing '/usr/riscv64-unknown-linux-gnu/sbin' with a symlink to 'usr/bin'
INFO: Migrating files from '/usr/riscv64-unknown-linux-gnu/usr/sbin' to '/usr/riscv64-unknown-linux-gnu/usr/bin'
INFO: Replacing '/usr/riscv64-unknown-linux-gnu/usr/sbin' with a symlink to 'bin'
INFO: Migrating files from '/usr/riscv64-unknown-linux-gnu/lib' to '/usr/riscv64-unknown-linux-gnu/usr/lib'
INFO: Replacing '/usr/riscv64-unknown-linux-gnu/lib' with a symlink to 'usr/lib'
INFO: Migrating files from '/usr/riscv64-unknown-linux-gnu/lib32' to '/usr/riscv64-unknown-linux-gnu/usr/lib32'
INFO: Replacing '/usr/riscv64-unknown-linux-gnu/lib32' with a symlink to 'usr/lib32'
INFO: Migrating files from '/usr/riscv64-unknown-linux-gnu/lib64' to '/usr/riscv64-unknown-linux-gnu/usr/lib64'
INFO: Skipping symlink '/usr/riscv64-unknown-linux-gnu/lib64/lp64d'; '/usr/riscv64-unknown-linux-gnu/usr/lib64/lp64d' already exists
INFO: Replacing '/usr/riscv64-unknown-linux-gnu/lib64' with a symlink to 'usr/lib64'
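After the conversion, the top-level directories handled by merge-usr should be symlinks into usr/. The following sketch checks exactly that for a given root; the function name `is_merged_usr` and the list of directories it inspects are assumptions based on the output above.

```shell
# is_merged_usr ROOT: succeed if none of the top-level directories under ROOT
# that merge-usr converts is still a real directory.
is_merged_usr() {
    for d in bin sbin lib lib32 lib64; do
        # A missing entry is fine; an existing one must be a symlink.
        if [ -e "$1/$d" ] && [ ! -L "$1/$d" ]; then
            echo "$1/$d is still a real directory" >&2
            return 1
        fi
    done
    return 0
}
```

Running `is_merged_usr ${RISCV_XDEV_ROOT}` should succeed silently once the conversion is complete.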

Now open the file ${RISCV_XDEV_ROOT}/etc/portage/make.conf and make it look like this:

${RISCV_XDEV_ROOT}/etc/portage/make.conf

# Note: profile variables are set/overridden in profile/ files:

# etc/portage/profile/use.force (overrides kernel_* USE variables)

# etc/portage/profile/make.defaults (overrides ARCH, KERNEL, ELIBC variables)

CHOST=riscv64-unknown-linux-gnu

CBUILD=x86_64-pc-linux-gnu

ROOT=/usr/${CHOST}/

ACCEPT_KEYWORDS="${ARCH} ~${ARCH}"

USE="${ARCH}"

FEATURES="-collision-protect sandbox buildpkg noman noinfo nodoc"

# Be sure we dont overwrite pkgs from another repo..

PKGDIR=${ROOT}var/cache/binpkgs/

PORTAGE_TMPDIR=${ROOT}tmp/

# Colour in portage output, useful for debugging

# Needed for ninja (e.g. z3)

CLICOLOR_FORCE=1

# https://gitlab.kitware.com/cmake/cmake/-/merge_requests/6747

# https://github.com/ninja-build/ninja/issues/174

CMAKE_COMPILER_COLOR_DIAGNOSTICS=ON

CMAKE_COLOR_DIAGNOSTICS=ON

# Common flags for cross-compiling and colour; params pulled from -march=native

COMMON_FLAGS="-mabi=lp64d -march=rv64imafdc_zicsr_zba_zbb -mcpu=sifive-u74 -mtune=sifive-7-series -O2 -pipe -fdiagnostics-color=always -frecord-gcc-switches --param l1-cache-size=32 --param l2-cache-size=2048"

CFLAGS="${COMMON_FLAGS}"

CXXFLAGS="${COMMON_FLAGS}"

FCFLAGS="${COMMON_FLAGS}"

FFLAGS="${COMMON_FLAGS}"

At this point the cross-toolchain should be functional and emerge should report the correct parameters set a few lines ago:

root #${RISCV_XARCH}-emerge --info | grep FLAGS

CFLAGS="-mabi=lp64d -march=rv64imafdc_zicsr_zba_zbb -mcpu=sifive-u74 -mtune=sifive-7-series -O2 -pipe -fdiagnostics-color=always -frecord-gcc-switches --param l1-cache-size=32 --param l2-cache-size=2048"
CXXFLAGS="-mabi=lp64d -march=rv64imafdc_zicsr_zba_zbb -mcpu=sifive-u74 -mtune=sifive-7-series -O2 -pipe -fdiagnostics-color=always -frecord-gcc-switches --param l1-cache-size=32 --param l2-cache-size=2048"
FCFLAGS="-mabi=lp64d -march=rv64imafdc_zicsr_zba_zbb -mcpu=sifive-u74 -mtune=sifive-7-series -O2 -pipe -fdiagnostics-color=always -frecord-gcc-switches --param l1-cache-size=32 --param l2-cache-size=2048"
FFLAGS="-mabi=lp64d -march=rv64imafdc_zicsr_zba_zbb -mcpu=sifive-u74 -mtune=sifive-7-series -O2 -pipe -fdiagnostics-color=always -frecord-gcc-switches --param l1-cache-size=32 --param l2-cache-size=2048"
LDFLAGS=""

Select the system profile for the cross-build environment

If a profile marked experimental (exp) is desired, use the --force flag to enable it.

The very next step is to define the system profile. To do so, choose among the riscv/23.0 profiles:

root #PORTAGE_CONFIGROOT=${RISCV_XDEV_ROOT} eselect profile list | grep 23.0

Whatever flavor is chosen, always select a merged-usr profile (e.g. 28) and not a split-usr one.

For example the following will select default/linux/riscv/23.0/rv64/lp64d (stable), which uses a merged /usr layout:

root #PORTAGE_CONFIGROOT=${RISCV_XDEV_ROOT} eselect profile set 28

To choose the split /usr layout equivalent instead, which is default/linux/riscv/23.0/rv64/split-usr/lp64d (stable) or 50:

root #PORTAGE_CONFIGROOT=${RISCV_XDEV_ROOT} eselect profile set 50
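The numeric indices used above (28 and 50) depend on the state of the Gentoo repository and can change over time. A small filter can look the index up by profile name instead. This sketch assumes the listing has the usual "[number] name (status)" layout and is piped without colour codes; the function name `profile_index` is hypothetical.

```shell
# profile_index NAME: read "eselect profile list" output on stdin and
# print the index of the profile called NAME.
profile_index() {
    # Field 1 is the bracketed index, field 2 the profile name.
    awk -v want="$1" '$2 == want { gsub(/\[|\]/, "", $1); print $1; exit }'
}
```

Piping the output of the eselect profile list command above into `profile_index default/linux/riscv/23.0/rv64/lp64d` prints the number to pass to eselect profile set.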

Generate the bootloader (U-Boot/OpenSBI)

This section only covers how to rebuild U-Boot and OpenSBI from their official repositories; the bootloader configuration itself is covered a bit later.

OpenSBI

It is possible to customize OpenSBI via a configuration menu by issuing:

make CROSS_COMPILE=${RISCV_XCOMPILE} PLATFORM=generic menuconfig

FW_OPTIONS=0 makes OpenSBI display a banner at system startup (the default is to hide it).

OpenSBI (SBI standing for RISC-V Supervisor Binary Interface) is an open software layer that provides runtime services for the M-Mode and thus is required by U-Boot. A short presentation in PDF to get an overview of what OpenSBI consists of can be found at https://riscv.org/wp-content/uploads/2024/12/13.30-RISCV_OpenSBI_Deep_Dive_v5.pdf

The very first step to compile OpenSBI is to clone its code repository:

root #cd ${RISCV_WORKDIR_PATH}

root #git clone https://github.com/riscv/opensbi.git

Then check out the most recent tagged version (1.5.1 at the time of writing):

root #cd opensbi

root #git tag --sort=version:refname | tail -n 1 | xargs git checkout

HEAD is now at 43cace6 lib: sbi: check result of pmp_get() in is_pmp_entry_mapped()

Then copy the default configuration for the platform named "generic" :

root #cp platform/generic/configs/defconfig .config

From there, cross-compile OpenSBI (the result is put inside the crossdev environment):

root #make CROSS_COMPILE=${RISCV_XCOMPILE} PLATFORM=generic FW_DYNAMIC=y FW_OPTIONS=0 I=${RISCV_XDEV_ROOT}/usr install

U-Boot

It is possible to customize U-Boot via a configuration menu by issuing:

make CROSS_COMPILE=riscv64-unknown-linux-gnu- menuconfig

The story is quite similar: start by stepping back into the work directory and clone the U-Boot code repository.

root #cd ${RISCV_WORKDIR_PATH}

root #git clone https://github.com/u-boot/u-boot

Inspect all tags and use the most recent one. Tags of stable versions are in the format vYYYY.MM, so the following command displays the version to use:

root #cd u-boot

root #git tag | grep -E '^v[0-9]{4}\.[0-9]{2}$' | tail -n 1

v2024.10
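A slightly stricter variant of this tag filter is sketched below: the pattern is anchored at both ends and the dot is escaped so that release candidates and unrelated tags are rejected, and the result is sorted by version rather than alphabetically. It assumes a sort implementation that supports -V (GNU coreutils does); the function name `latest_release_tag` is hypothetical.

```shell
# latest_release_tag: read tag names on stdin and print the newest vYYYY.MM tag.
latest_release_tag() {
    # Keep only stable tags, order them by version and take the last one.
    grep -E '^v[0-9]{4}\.[0-9]{2}$' | sort -V | tail -n 1
}
```

Inside the U-Boot checkout, `git tag | latest_release_tag` prints the tag to pass to git checkout.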

Then checkout the version given above:

root #git checkout v2024.10

HEAD is now at f919c3a889 Prepare v2024.10

Now, check the configs sub-directory for a file named starfive_visionfive2_defconfig:

root #find configs -name 'starfive*'

configs/starfive_visionfive2_defconfig

At this point the rest is pretty straightforward. Create a default configuration for the StarFive VisionFive 2:

root #make starfive_visionfive2_defconfig

#
# configuration written to .config
#

And cross-compile U-Boot:

root #make CROSS_COMPILE=${RISCV_XCOMPILE} OPENSBI=${RISCV_XDEV_ROOT}/usr/share/opensbi/lp64/generic/firmware/fw_dynamic.bin
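Before moving on it is worth confirming that the build produced the two files described in the boot sequence section. The check below assumes that they land in spl/u-boot-spl.bin.normal.out and u-boot.itb relative to the U-Boot source directory; these locations are an assumption and may differ between U-Boot versions. The function name `check_uboot_artifacts` is hypothetical.

```shell
# check_uboot_artifacts DIR: succeed if both firmware files exist in DIR and are not empty.
check_uboot_artifacts() {
    missing=0
    for f in spl/u-boot-spl.bin.normal.out u-boot.itb; do
        if [ ! -s "$1/$f" ]; then
            echo "missing or empty: $1/$f" >&2
            missing=1
        fi
    done
    return "$missing"
}
```

From the work directory, `check_uboot_artifacts u-boot` reports every file that is missing instead of stopping at the first one.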

Build a system image

The BSP (VisionFive 2 SDK) is outdated and not very actively maintained so it will not be used here. However, there is valuable information inside.

There are two philosophies when it comes to installing an operating system onto an SBC / embedded device such as the VisionFive 2.

The first involves writing a static system image, typically a squashfs, onto some form of media (typically eMMC, though NVMe is on the rise). The initramfs is able to load this image into RAM and use it as a rootfs; when an update is required the whole image is replaced as a single operation. There are advantages to this approach, particularly for embedded devices where users are not expected to update individual packages and recovery 'in-the-field' may be impractical, or to provide an A/B partition layout for updates. Systems configured in this way are also resilient when it comes to unexpected shutdowns as the only time that the rootfs storage volume is performing writes is when this image is being updated. This approach will be described as an embedded installation going forward and called out where possible.

The second approach involves writing a rootfs onto some storage media accessible to the device and using a package manager to install and update packages as required. For a Gentoo system this approach is more flexible and allows for a more traditional Linux experience, but requires more effort to set up and maintain. This approach will be described as a traditional installation going forward, called out where possible, and is the path taken in the next sections.

The process of generating a system image for a Gentoo installation on the VisionFive 2 may be broadly described as follows:

- Check out the required files (pre-built Gentoo stage 3, OpenSBI, U-Boot, etc.)

- Build a cross-compiler and a QEMU emulator

- Generate a Gentoo rootfs

- Generate a Linux kernel and ramdisk (U-Boot format conversion required)

- Flash the rootfs on some SD Card

- Try to boot the machine

Create the root filesystem

It is possible to build the root filesystem via two options:

- Directly use a prebuilt Gentoo stage 3 archive (the path taken here)

- Build all stages from scratch via Catalyst and a crossdev environment

Extract and prepare the pre-built stage 3 archive

Quoting the download page: Multilib stages include toolchain support for all 64-bit and 32-bit ABI and are based on lp64d. They are mostly useful for development and testing purposes.

Simply extract the stage 3 archive downloaded a few paragraphs ago:

root #cd ${RISCV_WORKDIR_PATH}

root #tar -Jxpvf stage3-*.tar.xz -C ${RISCV_ROOTFS_PATH}

From there, bind-mount the Gentoo repository. To avoid unfortunate incidents, it is advised to bind-mount it read-only:

root #mkdir -p ${RISCV_ROOTFS_PATH}/var/db/repos/gentoo

root #mount -o bind,ro /var/db/repos/gentoo ${RISCV_ROOTFS_PATH}/var/db/repos/gentoo

Same story for the Linux kernel but as read-write this time. To use the same kernel version in use on the host:

root #mkdir -p ${RISCV_ROOTFS_PATH}/usr/src/linux

root #mount -o bind /usr/src/linux ${RISCV_ROOTFS_PATH}/usr/src/linux

Refresh the root filesystem

Stick with the method described here and do not tamper with ${SYSROOT}, ${ROOT} or ${PORTAGE_CONFIGROOT} unless a host system broken beyond repair is acceptable: critical system binaries such as /lib/ld-linux.so.2 can be overwritten. If that happens, restoring from a recent backup (e.g. a virtual machine snapshot, a filesystem snapshot or a copy) is the only way out!

From there two strategies are offered:

- All @system packages are cross-built as binary packages (stored within the cross-compilation environment in ${RISCV_XDEV_ROOT}/var/cache/binpkgs, the PKGDIR set in make.conf above) and then re-emerged in the target environment (${RISCV_ROOTFS_PATH}). This approach is worthwhile if the very same target environment is expected to be rebuilt multiple times, as it saves a lot of time by avoiding recompiling the same packages over and over again.

- All @system packages are cross-built and deployed directly in the target environment (${RISCV_ROOTFS_PATH}); binary packages are still produced along the way unless buildpkg is removed from the FEATURES variable.

To build everything within the cross-compilation environment then deploying the produced binary packages:

root #${RISCV_XARCH}-emerge --keep-going --jobs=$(nproc) -e @system

root #ROOT=${RISCV_ROOTFS_PATH} ${RISCV_XARCH}-emerge --usepkgonly --jobs=$(nproc) -e @system

If working directly within the target environment is preferred:

root #ROOT=${RISCV_ROOTFS_PATH} ${RISCV_XARCH}-emerge --keep-going --jobs=$(nproc) -e @system

As a suitable bare minimum the following should be refreshed:

Any dependency hell or failure can be resolved by playing around with ${RISCV_XDEV_ROOT}/etc/portage/package.* directories content as usually done on the host system. Good luck! Once this stage is done go ahead to the next section.

Set the system profile for the target environment

Choose the same profile as the one chosen for the cross-build environment!

The very next step is to define the system profile for the target environment. The task is easy as both profiles must match: if the profile used for the cross-build environment is default/linux/riscv/23.0/rv64/lp64d (stable) then the profile to set for the target environment must also be default/linux/riscv/23.0/rv64/lp64d (stable) (or 35).

root #PORTAGE_CONFIGROOT=${RISCV_ROOTFS_PATH} eselect profile list | grep 23.0

For example the following will select default/linux/riscv/23.0/rv64/lp64d (stable) in the target environment (pay attention to the environment variable name):

root #PORTAGE_CONFIGROOT=${RISCV_ROOTFS_PATH} eselect profile set 35

Prepare the RISC-V root filesystem for chrooting

Start by emerging app-emulation/qemu into the target from the pre-built binary package created in the prerequisites section:

root #ROOT=${RISCV_ROOTFS_PATH} emerge --usepkgonly --oneshot --nodeps qemu

For convenience, while still outside the target, copy the file /etc/resolv.conf into the target:

root #cp /etc/resolv.conf ${RISCV_ROOTFS_PATH}/etc

Then bind-mount all required (pseudo-)filesystems in the target:

root #cd ${RISCV_ROOTFS_PATH}

root #mount --bind /proc proc

root #mount --bind /dev dev

root #mount --bind /dev/pts dev/pts

The last step is to chroot in the target and change the root password:

root #chroot . /bin/bash --login

root #passwd root

All of the puzzle pieces are now in place: the RISC-V binaries can be run inside the chrooted environment on an x86-64 machine.

Customize and configure the RISC-V stage3

For a first attempt, keep the environment lean: the fewer packages are emerged, the lower the chance of breakage.

Some additional packages are required: sys-apps/dtc, dev-embedded/u-boot-tools and sys-kernel/dracut. Emerge them directly on the host:

root #emerge --ask sys-apps/dtc dev-embedded/u-boot-tools sys-kernel/dracut

Then proceed with a typical Stage 3 customization and emerge whatever package is needed (outside of Kernel and Bootloader) as seen before:

root #${RISCV_XARCH}-emerge pkg1 pkg2 pkg3 ...

root #ROOT=${RISCV_ROOTFS_PATH} ${RISCV_XARCH}-emerge --usepkgonly pkg1 pkg2 pkg3 ...

or:

root #ROOT=${RISCV_ROOTFS_PATH} ${RISCV_XARCH}-emerge pkg1 pkg2 pkg3 ...

Build the kernel

CONFIG_SECCOMP=y is not set in the defconfig and should be manually enabled if packages such as net-libs/webkit-gtk will be installed with USE=seccomp.

There are some out-of-tree kernel modules (jpu/venc/vdec) that should be installed in addition to this kernel. These modules are available from the VisionFive 2 soft_3rdpart repo, or by emerging media-video/vf2vpudev from the bingch overlay.

Rebuilding the kernel from within a RISC-V chroot (+QEMU user) is awfully slow due to the QEMU overhead. Hence a cross-building approach is used here.

To avoid interfering with the host kernel, it is advised to copy the kernel sources into the target environment rather than using a bind mount. If the bind mount is chosen anyway, keep a copy of the host kernel configuration (.config file) at hand, or make sure it can be retrieved from a facility such as the pseudo-file /proc/config.gz!

Whichever option is chosen, move into ${RISCV_ROOTFS_PATH}/usr/src/linux, then either:

- Create a default configuration which is sufficient for a first shot

- Use a fairly functional configuration file (config-6.12.6). This configuration has no GPU support, no HDMI output support, no DSP support, no PWM-GPIO support.

root #cd ${RISCV_ROOTFS_PATH}/usr/src/linux

root #make mrproper

root #cp starfive_visionfive2_defconfig .config

root #make ARCH=riscv olddefconfig

If wished, the configuration can be tweaked via menuconfig or nconfig (not recommended for a first attempt):

root #make -j$(nproc) ARCH=riscv menuconfig

Build the kernel and install it:

root #make -j$(nproc) ARCH=riscv CROSS_COMPILE=${RISCV_XCOMPILE} Image.gz

root #make -j$(nproc) ARCH=riscv CROSS_COMPILE=${RISCV_XCOMPILE} modules

root #make ARCH=riscv CROSS_COMPILE=${RISCV_XCOMPILE} INSTALL_PATH=${RISCV_ROOTFS_PATH}/boot install

If loadable kernel modules are enabled, build, install them and build a ramdisk image:

root #make -j$(nproc) ARCH=riscv CROSS_COMPILE=${RISCV_XCOMPILE} modules

root #make ARCH=riscv CROSS_COMPILE=${RISCV_XCOMPILE} INSTALL_MOD_PATH=${RISCV_ROOTFS_PATH} modules_install

root #dracut -f --zstd --kver 6.12.6-gentoo ${RISCV_ROOTFS_PATH}/boot/initramfs-6.12.6-gentoo.zst

root #mkimage -v -A riscv -O linux -T ramdisk -C zstd -d ${RISCV_ROOTFS_PATH}/boot/initramfs-6.12.6-gentoo.zst ${RISCV_ROOTFS_PATH}/boot/initramfs-6.12.6-gentoo.uimg
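The version given to dracut with --kver has to match the name of a directory created by modules_install under lib/modules. The sketch below lists what is actually installed under a given root so that the version string does not have to be guessed. The helper names installed_kernel_versions and has_modules_for are hypothetical and only serve this illustration.

```shell
#!/bin/sh
# List the kernel versions that have modules installed under a given root.
# Each sub-directory of lib/modules is named after one kernel release.
installed_kernel_versions() {
    for dir in "${1%/}"/lib/modules/*/; do
        [ -d "$dir" ] || continue
        basename "$dir"
    done
}

# Succeeds if modules for the given version exist under the given root.
has_modules_for() {
    installed_kernel_versions "$1" | grep -qxF "$2"
}

# Hypothetical usage with the variables used in this article:
#   has_modules_for "${RISCV_ROOTFS_PATH}" 6.12.6-gentoo || echo "no modules for that version"
```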

Bootloader considerations

Unfortunately EXTCONF (SYSCONF) is an EFI/x86 thing and GRUB, despite supporting RISC-V, is also EFI-only. As the StarFive VisionFive 2 does not support EFI, the only remaining option is to work with a few U-Boot variables (those familiar with OpenBoot on SPARC will be on familiar ground). No worries: booting that way is no harder than using a Linux shell.

Imaging the device

As mentioned at the beginning, the StarFive VisionFive 2 can load U-Boot via several possibilities depending on the configuration of the RGPIO switches:

- A TF (MicroSD) card (located back side)

- An eMMC card (located back side)

- Over a UART

Once U-Boot has been loaded, it can boot the Linux environment either from a local device (a TF card, an eMMC or an NVMe stick) or via TFTP. As said earlier, U-Boot will not be able to use FIT images if it is configured via an extlinux configuration file.

TF Card/eMMC

Not all the TF/SD cards currently on the market are compatible with the StarFive VisionFive 2. Basically, any model having more than 128G capacity will fail to boot with weird errors like: dwmci_s: Response Timeout, BOOT fail,Error is 0xffffffff, etc. Consult the compatibility list: avl_list.html

The approach is very similar to the way that aarch64 devices are imaged due to the similar boot mechanisms:

- The TF card will be partitioned using GPT as a partition scheme.

- An image will be generated manually on-disk which may then be installed onto the TF card using tools such as dd.

The JH7110 Boot User guide.pdf mentions (section 4, page 15) that:

StarFive recommends that you use 1-bit QSPI Nor Flash or UART mode since there is a low possibility that the VisionFive 2 may fail to boot in eMMC or SDIO3.0 boot mode. For more details, refer to eMMC/SDIO3.0 boot issue section in VisionFive 2 Errata Sheet.[1]

"Not recommended" means that future board or StarFive JH7110 SoC revisions might drop the capability. However, booting the system from a TF card or an eMMC is still acceptable in the current context, so the explanations below ignore this warning.

Preparing the TF Card

The first step is to create a raw image and attach it to an available loopback block device:

root #cd ${RISCV_WORKDIR_PATH}

root #fallocate -l 8G visionfive2.img

root #losetup --find --show visionfive2.img

Now, just like a physical hard drive or SSD, the raw image has to be partitioned. In the present case four partitions will be used:

- partition 1 will contain the U-Boot SPL (Secondary Program Loader)

- partition 2 will contain U-Boot per se

- partition 3 will contain the kernel image and whatever that latter requires (e.g. an initramfs image and so on)

- partition 4 will contain the root filesystem (i.e. the Gentoo root filesystem)

If the TF card is larger than the size of the disk image (which is recommended; it saves a lot of time when imaging) resize2fs or a similar utility depending on file system may be used to resize the rootfs partition later.

According to the JH7110 Boot User guide.pdf (see pages 8 and 9), the partitioning scheme is the following:

| Offset (hex) | Length in bytes (hex) | Length in bytes (dec) | First sector # | Last sector # | GPT Partition # | Notes |
|---|---|---|---|---|---|---|
| 0x0 | 0x200 | 512 | 0 | 0 | N/A | Protective MBR |
| 0x200 | 0x200 | 512 | 1 | 1 | N/A | GPT header |
| 0x400 | 0x1FFC00 | 2096128 | 2 | 4095 | N/A | GPT partition entries followed by unused space |
| 0x200000 | 0x200000 | 2097152 | 4096 | 8191 | 1 | SPL |
| 0x400000 | 0x400000 | 4194304 | 8192 | 16383 | 2 | U-Boot |
| 0x800000 | 0x12400000 | 306184192 | 16384 | 614399 | 3 | Linux Kernel image and related files (i.e. /boot) |
| 0x12C00000 | ... | ... | 614400 | Last | 4 | Root filesystem |
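The byte offsets and lengths follow directly from the sector numbers, since the image uses 512-byte sectors. As a small sketch (the helper names sector_to_offset and partition_bytes are made up for this illustration), the values for the first three partitions can be recomputed from the first and last sectors passed to sgdisk in this article:

```shell
#!/bin/sh
SECTOR_SIZE=512   # logical sector size of the image, as reported by sgdisk

# Byte offset (hex) at which a given sector starts.
sector_to_offset() {
    printf '0x%X\n' $(( $1 * SECTOR_SIZE ))
}

# Size in bytes of a partition spanning first..last sector (inclusive).
partition_bytes() {
    echo $(( ($2 - $1 + 1) * SECTOR_SIZE ))
}

# First/last sectors of partitions 1 to 3 as used in this article.
for range in "4096 8191" "8192 16383" "16384 614399"; do
    set -- $range
    echo "sectors $1-$2: offset $(sector_to_offset "$1"), $(partition_bytes "$1" "$2") bytes"
done
```

With these inputs the SPL partition starts at 0x200000 and is 2 MiB long, U-Boot starts at 0x400000 and is 4 MiB long, and the kernel partition starts at 0x800000 and is 292 MiB long, which matches the sizes printed by sgdisk -p.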

Also a specific GPT partition type (UUID) is expected for all of the four partitions. Those to be used can be found in the U-Boot source code and more specifically in the file include/configs/starfive-visionfive2.h.

| GPT Partition # | GPT partition type UUID | Notes |
|---|---|---|
| 1 | 2E54B353-1271-4842-806F-E436D6AF6985 | The boot ROM looks for a partition with that type on the medium (being the first one is not mandatory) |
| 2 | BC13C2FF-59E6-4262-A352-B275FD6F7172 | This position is hard-coded in the U-Boot binaries and has to be the second one with the default U-Boot configuration (can be changed by recompiling U-Boot) |
| 3 | EBD0A0A2-B9E5-4433-87C0-68B6B72699C7 | With the default U-Boot configuration must be an ext2, ext4 or vFAT filesystem (BTRFS and others can be supported by recompiling U-Boot). |
| 4 | 0FC63DAF-8483-4772-8E79-3D69D8477DE4 | |
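Because a mistyped type GUID only shows up at boot time, it can be worth checking the partition types once the partitions exist. The following sketch encodes the table above in a lookup function and compares case-insensitively, since lsblk prints GUIDs in lowercase. The function names expected_parttype and check_parttype are hypothetical.

```shell
#!/bin/sh
# Expected GPT partition type GUID for each partition number used here.
expected_parttype() {
    case "$1" in
        1) echo 2E54B353-1271-4842-806F-E436D6AF6985 ;;
        2) echo BC13C2FF-59E6-4262-A352-B275FD6F7172 ;;
        3) echo EBD0A0A2-B9E5-4433-87C0-68B6B72699C7 ;;
        4) echo 0FC63DAF-8483-4772-8E79-3D69D8477DE4 ;;
        *) return 1 ;;
    esac
}

# Compare a GUID (any letter case) with the expected one for partition $1.
check_parttype() {
    want=$(expected_parttype "$1") || return 1
    got=$(printf '%s' "$2" | tr '[:lower:]' '[:upper:]')
    [ "$got" = "$want" ]
}

# Hypothetical usage once the partitions exist:
#   check_parttype 1 "$(lsblk -no PARTTYPE /dev/loop0p1)" && echo "partition 1 OK"
```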

Putting things together, the partitioning can be done with a single command:

root #sgdisk --clear \
--new=1:4096:8191 --change-name=1:"spl" --typecode=1:2E54B353-1271-4842-806F-E436D6AF6985 \
--new=2:8192:16383 --change-name=2:"uboot" --typecode=2:BC13C2FF-59E6-4262-A352-B275FD6F7172 \
--new=3:16384:614399 --change-name=3:"kernel" --typecode=3:EBD0A0A2-B9E5-4433-87C0-68B6B72699C7 \
--new=4:614400:0 --change-name=4:"root" --typecode=4:0FC63DAF-8483-4772-8E79-3D69D8477DE4 \
/dev/loop0

Creating new GPT entries in memory.
Warning: The kernel is still using the old partition table.
The new table will be used at the next reboot or after you
run partprobe(8) or kpartx(8)
The operation has completed successfully.

Execute partprobe as suggested:

root #partprobe -s /dev/loop0

Now check and confirm the result (ignore the Code column, which shows a shortened partition type):

root #sgdisk -p /dev/loop0

Disk /dev/loop0: 16777216 sectors, 8.0 GiB
Sector size (logical/physical): 512/512 bytes
Disk identifier (GUID): 94F6E0D1-F4B0-4585-A440-FC79A958A148
Partition table holds up to 128 entries
Main partition table begins at sector 2 and ends at sector 33
First usable sector is 34, last usable sector is 16777182
Partitions will be aligned on 2048-sector boundaries
Total free space is 4062 sectors (2.0 MiB)

Number  Start (sector)    End (sector)  Size       Code  Name
   1            4096            8191   2.0 MiB     FFFF  spl
   2            8192           16383   4.0 MiB     EA00  uboot
   3           16384          614399   292.0 MiB   0700  kernel
   4          614400        16777182   7.7 GiB     8300  root

All partitions match the expected sizes and boundaries. The SPL and U-Boot are taken care of in the next section.

Write the SPL and U-Boot

It is possible to combine the SPL and U-Boot into a single image. This will require a different partition layout but should otherwise be similar to this example. This is not covered here, but should be. Please help out by updating the wiki!

The SPL and U-Boot may be both directly dumped to the image using dd:

root #cd ${RISCV_WORKDIR_PATH}

root #dd if=u-boot/spl/u-boot-spl.bin.normal.out of=/dev/loop0p1

root #dd if=u-boot/u-boot.itb of=/dev/loop0p2

Incorporate the root filesystem to the media image

The boot partition may be formatted as FAT32/VFAT with mkfs.fat, and the root partition as ext4 with mkfs.ext4. The following commands may be used to format and mount the partitions:

root #mkfs.fat -F 32 -n BOOT /dev/loop0p3

root #mkfs.ext4 -L root /dev/loop0p4

Creating filesystem with 2020347 4k blocks and 505920 inodes

Filesystem UUID: 68acdb19-5d85-4401-a9e6-033e5b4a357d

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632

Allocating group tables: done

Writing inode tables: done

Creating journal (16384 blocks): done

Writing superblocks and filesystem accounting information: done

Next, mount the root and boot filesystems (here under /mnt/visionfive2) and copy the whole content located in ${RISCV_ROOTFS_PATH}:

root #mount /dev/loop0p4 /mnt/visionfive2

root #mkdir -p /mnt/visionfive2/boot

root #mount /dev/loop0p3 /mnt/visionfive2/boot

root #rsync -aH --progress --delete-before --exclude=/dev --exclude=/proc --exclude=/sys --exclude=/var/tmp ${RISCV_ROOTFS_PATH}/ /mnt/visionfive2/

Edit /mnt/visionfive2/etc/fstab to cater for any file systems that need to be mounted on the device. A typical content is the following:

/mnt/visionfive2/etc/fstab

LABEL=BOOT /boot vfat defaults 0 1

UUID=68acdb19-5d85-4401-a9e6-033e5b4a357d / ext4 defaults 0 1

The UUID 68acdb19-5d85-4401-a9e6-033e5b4a357d comes from what mkfs.ext4 returned on the Filesystem UUID line.
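If the output of mkfs.ext4 is no longer on screen, the UUID can be read back with blkid and the two entries generated instead of typed. This is only a sketch under the layout used in this article (a vfat /boot found by its BOOT label, an ext4 root found by UUID); the function name make_fstab is hypothetical.

```shell
#!/bin/sh
# Print fstab entries for the layout used here: a vfat /boot found by label
# and an ext4 root found by filesystem UUID (passed as $1).
make_fstab() {
    printf 'LABEL=BOOT\t/boot\tvfat\tdefaults\t0 1\n'
    printf 'UUID=%s\t/\text4\tdefaults\t0 1\n' "$1"
}

# Hypothetical usage while the image is still attached to /dev/loop0:
#   make_fstab "$(blkid -s UUID -o value /dev/loop0p4)" > /mnt/visionfive2/etc/fstab
```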

Then unmount the image:

root #umount -R /mnt/visionfive2

root #losetup -d /dev/loop0

Transfer the image to a TF card

Depending on what is used for reading/writing SD Cards the name of the device to dump the image on can vary:

- /dev/mmcblkX: usually for a device directly connected to a PCI/PCIe bus

- /dev/sdX: usually for a USB device

First, simply dump the image using the following command:

root #dd if=visionfive2.img of=/dev/mmcblk0 bs=4M oflag=sync status=progress

Now the secondary (backup) GPT has to be moved to the actual end of the TF/SD card, which sgdisk does with its -e option. The last partition is then deleted and recreated so that it spans the remaining space, and finally the file system is resized:

root #sgdisk -e /dev/mmcblk0

root #sgdisk -d 4 /dev/mmcblk0

root #sgdisk --new=4:614400:0 --change-name=4:"root" --typecode=4:0FC63DAF-8483-4772-8E79-3D69D8477DE4 /dev/mmcblk0

root #partprobe /dev/mmcblk0

Check to see what happened:

root #sgdisk -p /dev/mmcblk0

Disk /dev/sdd: 62333952 sectors, 29.7 GiB
Model: STORAGE DEVICE
Sector size (logical/physical): 512/512 bytes
Disk identifier (GUID): 429D17C2-A779-4D47-B7C9-49820D387418
Partition table holds up to 128 entries
Main partition table begins at sector 2 and ends at sector 33
First usable sector is 34, last usable sector is 62333918
Partitions will be aligned on 2048-sector boundaries
Total free space is 4062 sectors (2.0 MiB)

Number  Start (sector)    End (sector)  Size       Code  Name
   1            4096            8191   2.0 MiB     FFFF  spl
   2            8192           16383   4.0 MiB     EA00  uboot
   3           16384          614399   292.0 MiB   0700  kernel
   4          614400        62333918   29.4 GiB    8300  root

This time the fourth partition, which holds the root filesystem, has the correct size. Last but not least, as the fourth partition is using an ext4 filesystem, the latter can be extended with resize2fs:

root #resize2fs /dev/mmcblk0p4

Resizing the filesystem on /dev/mmcblk0p4 to 7714939 (4k) blocks.
The filesystem on /dev/sdd4 is now 7714939 (4k) blocks long.
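The block counts reported by mkfs.ext4 and resize2fs can be cross-checked against the partition boundaries shown by sgdisk -p. The sketch below assumes 512-byte sectors and 4 KiB filesystem blocks, as in the outputs above; the function name blocks_4k is made up for this illustration.

```shell
#!/bin/sh
# Number of whole 4 KiB filesystem blocks that fit in a partition spanning
# first..last sector (inclusive), assuming 512-byte sectors.
blocks_4k() {
    echo $(( ($2 - $1 + 1) * 512 / 4096 ))
}

blocks_4k 614400 16777182   # root partition inside the 8 GiB image
blocks_4k 614400 62333918   # root partition after growing it on the card
```

The two calls print 2020347 and 7714939, the figures reported by mkfs.ext4 for the image and by resize2fs for the card respectively.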

Booting the device

All right! The time has come to boot the StarFive VisionFive 2. Once U-Boot is running, it can load system images and start the Linux kernel in several ways:

- From a TF/SD card or eMMC

- From an NVMe stick

- Via BOOTP/TFTP (PXE)

TF/SD Card

Again a reminder: not all TF/SD cards are compatible with the board, especially those having more than 128G. If you have weird boot errors (or if nothing seems to happen at all), check for that compatibility issue first.

Now:

- power the StarFive VisionFive 2 down

- ensure the boot selection switches are in the position: RGPIO_0 = High, RGPIO_1 = Low

- insert the TF/SD card in the StarFive VisionFive 2's TF/SD card slot

- run minicom using the correct TTY device (e.g. ttyUSB0) and settings (speed = 115200 bps with 8 bits, no parity, 1 stop bit => 8N1 which is the default):

minicom -D /dev/ttyUSB0 -b 115200

- (Fingers crossed!) power the StarFive VisionFive 2 up

If all is in order the StarFive VisionFive 2 should boot:

U-Boot SPL 2024.10 (Dec 15 2024 - 12:54:37 -0500)

Once the U-Boot shell is reached, check that all of the partitions are seen. The very first step is to list the MMC devices:

StarFive #mmc list

mmc@16010000: 0
mmc@16020000: 1 (SD)

Now switch to the device identified with (SD):

StarFive #mmc dev 1

switch to partitions #0, OK
mmc1 is current device

Then list the partitions on it:

StarFive #mmc part

To finish try a ls on the third (FAT32/VFAT) and the fourth (ext4) partitions:

StarFive #ls mmc 1:3

3146435 System.map-6.12.2-gentoo
8144522 vmlinuz-6.12.2-gentoo

2 file(s), 0 dir(s)

StarFive #ls mmc 1:4

At this point the worst is behind:

- The board recognizes the TF/SD card and can boot from it

- U-Boot sees what it must see

One last detour before moving on, for the sake of the demonstration:

StarFive #fdt print /binman

This reflects what has been written on the first two partitions of the medium (/dev/loop0p1 and /dev/loop0p2) in previous sections.

Do not use the DTB built by U-Boot! The kernel will start but will panic when spawning /sbin/init. Always use the DTB coming with the Linux kernel.

From the U-Boot shell, if no initramfs (i.e. no kernel module) is used, the following commands load and start a Linux kernel:

StarFive #load mmc 1:3 $fdt_addr_r jh7110-starfive-visionfive-2-v1.3b.dtb

38025 bytes read in 6 ms (6 MiB/s)

StarFive #load mmc 1:3 $kernel_addr_r Image

21627364 bytes read in 1316 ms (15.7 MiB/s)

StarFive #env set bootargs console=ttyS0,115200 rootwait earlycon=sbi root=/dev/mmcblk1p4 rw

StarFive #booti $kernel_addr_r - $fdt_addr_r

## Flattened Device Tree blob at 46000000
Booting using the fdt blob at 0x46000000
Working FDT set to 46000000
Loading Device Tree to 00000000fe6ea000, end 00000000fe6f6488 ... OK
Working FDT set to fe6ea000

Starting kernel ...

[ 0.000000] Linux version 6.12.4-gentoo-r1 (root@oxygen.universe.lan) (riscv64-unknown-linux-gnu-gcc (Gentoo 14.2.1_p20241116 p3) 14.2.1 20241116, GNU ld (Gentoo 2.43 p3) 2.43.1) #2 SMP Wed Dec 18 09:55:15 EST 2024
[ 0.000000] Machine model: StarFive VisionFive 2 v1.3B
[ 0.000000] SBI specification v2.0 detected
[ 0.000000] SBI implementation ID=0x1 Version=0x10005

In the case of a systemd based system: messages complaining about "failed to chase symlink" might appear. They are harmless and can be ignored.

Once the boot process completes, a login prompt should be reached:

This is StarFive (Linux riscv64 6.12.4-gentoo-r1) 13:27:11

StarFive login:

Et voilà! The Gentoo Linux environment on the TF/SD card is fully functional. Keep the image used to flash the TF/SD card aside so that this Gentoo environment can be reproduced on demand. Congratulations! So what's next?

eMMC

The TF/SD card is a cheap and convenient way to start; nevertheless it has an irritating aspect: the speed! It only reaches around 15MB/s at best, even with high-end UHS-II/V60 micro-SD cards. A much more pleasant way to work is to use an eMMC chip, which can speed things up.

The StarFive VisionFive 2 can accept virtually any brand of eMMC (Orange Pi 5 eMMC are known to work) as long as they have a single or a double B2B connector. Pay attention to the orientation: only one of the two B2B connectors at the back of the board is wired to the rest of the board, the other not being connected to anything (its sole purpose is to reinforce the mechanical stability). Simply follow the markings on the PCB:

- The B2B connector near the J99 label is the live connector

- The B2B connector near the J9 label is the dummy connector (not connected to anything)

Ensure to correctly snap the connector in place: a click must be heard without putting too much force.

Now that the eMMC has been put on its socket, two strategies can be followed:

- Write from the host PC directly onto the eMMC via an eMMC/SD card adapter having a B2B connector

- Transfer the content from the (functional) TF/SD card to the eMMC

Whichever approach is chosen, it is worth noting that, per the standard, an eMMC has two hardware partitions (they cannot be removed) that play the very same role as the first two partitions of the SD card (i.e. where the U-Boot SPL and U-Boot itself reside). Both are 4 MB in size and are named boot0 and boot1 respectively, prefixed by their MMC slot name:

root@StarFive #lsblk

NAME         MAJ:MIN RM  SIZE RO TYPE MOUNTPOINTS
mmcblk1      179:0    0 59.5G  0 disk
├─mmcblk1p1  179:1    0    2M  0 part
├─mmcblk1p2  179:2    0    4M  0 part
├─mmcblk1p3  179:3    0  292M  0 part /boot
└─mmcblk1p4  179:4    0 23.7G  0 part /
mmcblk0      179:8    0  233G  0 disk
mmcblk0boot0 179:16   0    4M  1 disk
mmcblk0boot1 179:24   0    4M  1 disk

In the example above, /dev/mmcblk1 is the SD card slot whereas /dev/mmcblk0 is the eMMC slot (default settings if the device tree has not been modified from its vanilla form). Another block device, not listed here, is /dev/mmcblk0rpmb. It is known as the Replay Protected Memory Block (RPMB) and can be set aside for the rest of the explanations.

From the SD Card to the eMMC card

A word about those mmcblk0bootX hardware partitions before moving on: they are too small for this use (4 MiB = 8192 sectors), so neither of them will be used here and the process is the same as what has been shown previously. First things first: partition the eMMC!

root@StarFive #sgdisk --clear \
--new=1:4096:8191 --change-name=1:"spl" --typecode=1:2E54B353-1271-4842-806F-E436D6AF6985 \
--new=2:8192:16383 --change-name=2:"uboot" --typecode=2:BC13C2FF-59E6-4262-A352-B275FD6F7172 \
--new=3:16384:614399 --change-name=3:"kernel" --typecode=3:C12A7328-F81F-11D2-BA4B-00A0C93EC93B \
--new=4:614400:0 --change-name=4:"root" --typecode=4:0FC63DAF-8483-4772-8E79-3D69D8477DE4 \
/dev/mmcblk0

Then refresh the system knowledge about their existence:

root@StarFive #partprobe -s /dev/mmcblk0

Dump U-Boot and its SPL loader on the first two partitions:

root@StarFive #dd if=/dev/mmcblk1p1 of=/dev/mmcblk0p1 oflag=sync bs=1M

root@StarFive #dd if=/dev/mmcblk1p2 of=/dev/mmcblk0p2 oflag=sync bs=1M

Now respectively create a vfat and an ext4 on the last two partitions as seen before:

root@StarFive #mkfs.vfat /dev/mmcblk0p3

root@StarFive #mkfs.ext4 /dev/mmcblk0p4

Mount both of those filesystems:

root@StarFive #mkdir /mnt/gentoo

root@StarFive #mount /dev/mmcblk0p4 /mnt/gentoo

root@StarFive #mount /dev/mmcblk0p3 /mnt/gentoo/boot

The remaining work to do is to copy the root filesystem and the contents of /boot. Two options:

- Create a tarball on the host, copy it on a USB key then plug the USB key in one of the USB ports of the StarFive VisionFive 2 and finally untar the tarball (cold copy)

- The ugly and reckless way: rsync the live filesystems onto the eMMC (warm/hot copy) even if some files are opened (2-3 attempts can be made if rsync complains).

Do not dump live filesystems via a dd command as used for copying U-Boot!

- USB key method:

  - Tarball creation on the host:

cd ${RISCV_WORKDIR_PATH} && tar -zcvpf rootfs-svf2.tar.gz --one-file-system rootfs

  - Tarball extraction on the StarFive VisionFive 2:

tar -zxvpf rootfs-svf2.tar.gz --strip-components=1 -C /mnt/gentoo

- Live filesystems copy method:

  - On the StarFive VisionFive 2:

rsync -aH --progress --delete-before --exclude=/proc --exclude=/sys --exclude=/dev --exclude=/run --exclude=/mnt / /mnt/gentoo/

  - Repeat one or two more times if rsync reports errors

Before rebooting: check /etc/fstab for devices referenced as /dev/mmcblk1pX and change the path to /dev/mmcblk0pX. Then refresh the information known by systemd if applicable:

root@StarFive #systemctl daemon-reload

And reboot!

root@StarFive #reboot

From the host to the eMMC card

The approach is the same as the one described for the SD card a few paragraphs ago:

- Put the eMMC card on a USB-eMMC adapter having B2B connectors

- Partition the eMMC as shown above for the SD card

- Copy the U-Boot stuff on the first two partitions

- Copy the root filesystem

- And... reboot!

Back in U-Boot shell

Once at the U-Boot shell, start by ejecting the SD card from its slot and keep it aside, as a working Gentoo environment lives on it. This is very handy should the Gentoo environment residing on the eMMC get broken for one reason or another.

Change the variable bootargs to make Linux use the eMMC card rather than the SD Card:

StarFive #env save

Suggestion to render things a bit simpler by avoiding typing or copying/pasting the same long commands over and over again:

StarFive #env set load_gentoo 'load mmc 0:3 ${fdt_addr_r} jh7110-starfive-visionfive-2-v1.3b.dtb; load mmc 0:3 ${kernel_addr_r} vmlinuz-${gentoo_kernel_version}; load mmc 0:3 ${ramdisk_addr_r} initramfs-${gentoo_kernel_version}.uimg'

StarFive #env set start_gentoo 'booti ${kernel_addr_r} ${ramdisk_addr_r} ${fdt_addr_r}'

StarFive #env save

Saving Environment to SPIFlash... Erasing SPI flash...Writing to SPI flash...done
OK

Then to start Gentoo:

This is not done yet! The boot configuration must be changed via the boot selector DIP switches.

Flip the switch!

The very last thing to change is to flip the boot selector switches to tell the board to boot from the eMMC rather than the SD card. Power the board down first!

Once the board is unplugged from any current source simply set the following configuration for the boot selector switch:

- RGPIO_0: Low

- RGPIO_1: High

Remove the SD Card from the board then power the board back on (fingers crossed): the SBI startup banner should appear followed by a U-Boot shell.

Congratulations! The StarFive VisionFive 2 is bootable and usable with only the eMMC card.

Known / encountered issues

init[1]: unhandled signal 4 code 0x1 at (…) in ld-linux-riscv64-lp64d.so.1

Symptom: the kernel starts but panics while trying to run /sbin/init like below

Starting kernel ...

sbi_trap_error: hart0: trap0: load fault handler failed (error -2)
sbi_trap_error: hart0: trap0: mcause=0x0000000000000005 mtval=0x0000000040050088
sbi_trap_error: hart0: trap0: mepc=0x00000000400085ca mstatus=0x0000000200001800
sbi_trap_error: hart0: trap0: ra=0x000000004000f40e sp=0x000000004004fef0
sbi_trap_error: hart0: trap0: gp=0x0000000000000000 tp=0x0000000040050000
sbi_trap_error: hart0: trap0: s0=0x000000004004ff00 s1=0x0000000040050000
sbi_trap_error: hart0: trap0: a0=0x0000000040050088 a1=0x0000000000000002
sbi_trap_error: hart0: trap0: a2=0x0000000000000000 a3=0x0000000000000019
(...)
[ 4.953256] EXT4-fs (mmcblk1p4): mounted filesystem 3b5a1824-0f83-49d2-a3d5-2698a6e83dd9 r/w with ordered data mode. Quota mode: disabled.
[ 4.965782] VFS: Mounted root (ext4 filesystem) on device 179:4.
[ 4.971897] devtmpfs: mounted
[ 4.978632] Freeing unused kernel image (initmem) memory: 3652K
[ 4.984674] Run /sbin/init as init process
[ 5.052665] init[1]: unhandled signal 4 code 0x1 at 0x0000003f9f9cc6dc in ld-linux-riscv64-lp64d.so.1[146dc,3f9f9b8000+20000]
[ 5.063994] CPU: 3 UID: 0 PID: 1 Comm: init Tainted: G T 6.12.4-gentoo-r1 #2
[ 5.072429] Tainted: [T]=RANDSTRUCT
[ 5.075915] Hardware name: starfive,visionfive-2-v1.3b (DT)
[ 5.081482] epc : 0000003f9f9cc6dc ra : 0000003f9f9b90f8 sp : 0000003fc2b27760
[ 5.088699] gp : 0000000000000000 tp : 0000000000000000 t0 : 0000000000000008
[ 5.095915] t1 : 0000003f9f9dbe98 t2 : 0000000000000008 s0 : 0000003fc2b27e60
[ 5.103131] s1 : 0000002ad0d9d559 a0 : 0000003fc2b27798 a1 : 0000000000000000
[ 5.110347] a2 : 0000003fc2b279b8 a3 : 0000002ad0d9d568 a4 : 0000003f9f9db0c8
[ 5.117562] a5 : 0000003fc2b27788 a6 : ffffffffffffffff a7 : 0000000000000030
[ 5.124778] s2 : 0000002ad0db0408 s3 : 0000003fc2b27900 s4 : 0000003f9f9db260
[ 5.131994] s5 : 0000003f9f9db260 s6 : 0000000000000000 s7 : 0000000000000000
[ 5.139209] s8 : 0000000000000000 s9 : 0000000000000001 s10: 0000003f9f9d9ca0
[ 5.140462] mmc0: Failed to initialize a non-removable card
[ 5.146423] s11: 0000003f9f9d9cc0 t3 : 0000002ad0d9d5e0 t4 : 0000003f9f9dbe90
[ 5.146431] t5 : 000000000000003b t6 : 00000000003dd1fb
[ 5.146436] status: 0000000200000020 badaddr: 000000000000b920 cause: 0000000000000002
[ 5.146451] Code: 3423 0585 3823 0595 3c23 05a5 3023 07b5 3423 0625 (b920) bd24
[ 5.179896] Kernel panic - not syncing: Attempted to kill init! exitcode=0x00000004

- Cause: The S7 HART has been used rather than one of the U74 HARTs. The U-Boot device tree does not disable the S7 HART whereas the Linux kernel one does. Consequence: the Linux kernel boots on the S7 HART, which does not support the LP64D ABI, hence the traps and the subsequent kernel panic.

- Remedy:

- Load the Linux kernel DTB jh7110-starfive-visionfive-2-v1.3b.dtb located in arch/riscv/boot/dts/starfive rather than u-boot.dtb from the U-Boot shell.

- If the above file is not present, make sure the following kernel options are set:

Device Drivers --->

-*- Device Tree and Open Firmware support --->

[ ] Device Tree runtime unit tests

[*] Build all Device Tree Blobs

[*] Device Tree overlays

Repetitive NVMe QID timeouts / reset controller

Symptom: Messages similar to those below are reported on the system console / system logs:

nvme nvme0: I/O 0 (I/O Cmd) QID 1 timeout, aborting
nvme nvme0: I/O 0 QID 1 timeout, reset controller

- Causes: Multiple causes have been reported:

- An unstable or too "weak" power supply;

- The NVMe is using the 512 bytes/sector format (puts a strain on the controller due to a higher number of I/O requests);

- Remedy:

- Power supply issue: try with a "beefier" power supply. The StarFive VisionFive 2, via its USB-C port, accepts up to 20V and is able to negotiate with PD (Power Delivery) and QC 2.0/3.0 power supplies. Refer to the section 4.1 for details;

- NVMe sector size issue: Check what LBA formats are supported and switch to a 4K profile using the nvme command

Useful notes

Some useful notes that may be of interest to the reader can be found below.

Musl

This example uses glibc (the GNU C library). It is possible to use musl libc as the system's C library instead. The TL;DR is:

- Use the tuple riscv64-unknown-linux-musl instead of riscv64-unknown-linux-gnu wherever crossdev is in use.

- Obtain (or build) any lp64d musl stage3 tarball and use that.

- Select an appropriate musl profile.

Faster installation

Anywhere that QEMU-user is invoked to build a cross-arch package, using Portage within a chroot may be replaced with an external installation utilizing crossdev to cross-compile binaries and portage to install them into the image as follows:

root #riscv64-unknown-linux-gnu-emerge --ask sys-kernel/dracut

root #cd rootfs

root #ROOT=$PWD/ riscv64-unknown-linux-gnu-emerge --ask --usepkgonly --oneshot sys-kernel/dracut

It will be faster to cross-compile packages and install them into the image than to use QEMU-user to build them within the chroot, though this is not the preferred approach.

make.conf

Some useful additions for cross-compiling packages and identifying breakage in failed package builds:

/etc/portage/make.conf

# Colour in portage output, useful for debugging

# Needed for ninja (e.g. z3)

CLICOLOR_FORCE=1

# https://gitlab.kitware.com/cmake/cmake/-/merge_requests/6747

# https://github.com/ninja-build/ninja/issues/174

CMAKE_COMPILER_COLOR_DIAGNOSTICS=ON

CMAKE_COLOR_DIAGNOSTICS=ON

# Common flags for cross-compiling and colour; params pulled from -march=native

COMMON_FLAGS="-mabi=lp64d -march=rv64imafdc_zicsr_zba_zbb -mcpu=sifive-u74 -mtune=sifive-7-series -O2 -pipe -fdiagnostics-color=always -frecord-gcc-switches --param l1-cache-size=32 --param l2-cache-size=2048"

# Enable QA messages from iwdevtools

PORTAGE_ELOG_CLASSES="${PORTAGE_ELOG_CLASSES} qa"

Clearing the U-Boot environment configuration

The configuration is stored within the QSPIFlash:

- Start address: 0xF0000 => CONFIG_ENV_OFFSET=0xF0000

- Length: 64KiB (65536 bytes) => CONFIG_ENV_SIZE=0x10000

No magic here: those values come from the U-Boot .config file.

The trick is: as the QSPIFlash content is not 1:1 mapped in the CPU addressable space (only the QSPIFlash control registers are), it is required to go via the sf commands (instead of the mw command) like below:

StarFive #sf probe

SF: Detected gd25lq128 with page size 256 Bytes, erase size 4 KiB, total 16 MiB

Then:

StarFive #sf erase 0xf0000 0x10000

SF: 65536 bytes @ 0xf0000 Erased: OK
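When adapting these values to another board or U-Boot configuration, it helps to check on the host that the region lines up with the erase size reported by sf probe, since sf erase is generally expected to be given an offset and a length that are multiples of it. The following is a minimal sketch of that arithmetic; the function names erase_aligned and region_end are hypothetical and the 4 KiB erase size is taken from the sf probe output above.

```shell
#!/bin/sh
# Succeeds when both the offset and the length of a flash region are
# multiples of the erase size (all values in bytes, hex accepted).
erase_aligned() {
    [ $(( $1 % $3 )) -eq 0 ] && [ $(( $2 % $3 )) -eq 0 ]
}

# End address (exclusive) of a region, printed in hex.
region_end() {
    printf '0x%X\n' $(( $1 + $2 ))
}

erase_aligned 0xF0000 0x10000 4096 && echo "environment region ends at $(region_end 0xF0000 0x10000)"
```

With CONFIG_ENV_OFFSET=0xF0000 and CONFIG_ENV_SIZE=0x10000 the region is aligned on 4 KiB and ends at 0x100000.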

See also

- RISC-V hardware list — a list of RISC-V hardware owned by the Gentoo community within the #gentoo-riscv (webchat) channel.

- Embedded Handbook — a collection of community maintained documents providing a consolidation of embedded and SoC knowledge for Gentoo.

External resources

- All public StarFive documents archive

- Upstream documentation

- Upstream build system.

- Upstream kernel support tracking

- Andrew's Experimental Gentoo Image discussion on rvspace.org

- Updating firmware via UART

- VF2 Technical Reference Manual

- U-Boot VF2 documentation

- U-Boot booting documentation

- RISC-V Standard Extensions